We Applied Critical Transitions Detection to Credit Spreads — The Simple Threshold Won

One of the more humbling results in our research came from what seemed like an obviously good idea: if critical transitions theory detects structural instability in equity returns, shouldn't it work even better on credit spreads?

Credit markets are where financial stress shows up first. Bond investors lend money directly to companies, so they have stronger incentives to price default risk accurately than equity investors, who are pricing growth expectations. When corporate health deteriorates, it typically shows up in credit spreads before it shows up in stock prices. The high-yield option-adjusted spread (HY OAS), an ICE BofA index published daily on FRED (the St. Louis Fed's data service), measures the gap between junk bond yields and Treasury yields. When that spread crosses its 90th historical percentile, equity drawdowns tend to follow.
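A minimal sketch of one way to define "crosses its 90th historical percentile", assuming an expanding window so each day is ranked only against data available up to that day. The post doesn't say how the percentile was computed, so the function names, the `min_periods` warm-up, and the toy series are all hypothetical.

```python
import numpy as np
import pandas as pd

def expanding_percentile(series: pd.Series, min_periods: int = 252) -> pd.Series:
    """Percentile rank (0-100) of each value against all history up to and including that day."""
    values = series.to_numpy(dtype=float)
    out = np.full(len(values), np.nan)
    for i in range(min_periods - 1, len(values)):
        history = values[: i + 1]          # no look-ahead: only data through day i
        out[i] = 100.0 * np.mean(history <= values[i])
    return pd.Series(out, index=series.index)

def upward_crossings(pct: pd.Series, threshold: float = 90.0) -> pd.DatetimeIndex:
    """Dates where the percentile moves from below the threshold to at or above it."""
    above = pct >= threshold               # NaN warm-up values compare as False
    prev_above = above.shift(1, fill_value=False)
    return pct.index[(above & ~prev_above).to_numpy()]

# Toy spread series (made-up values, in percentage points)
dates = pd.bdate_range("2000-01-03", periods=8)
spread = pd.Series([4.0, 4.1, 3.9, 4.2, 6.0, 6.1, 4.0, 6.5], index=dates)
pct = expanding_percentile(spread, min_periods=4)
events = upward_crossings(pct)
print(events)
```

Counting only upward crossings, rather than every day spent above the threshold, is what turns a long stressed period into a single signal.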

We wanted to know: can we detect the credit market destabilizing before the spread physically crosses that threshold?

The Setup

We applied the same dual-channel detection framework we use on equity returns to daily changes in the high-yield credit spread. The logic was straightforward — before a credit spread blowout, you'd expect the daily spread changes to become "sticky" (each day's move starts predicting the next), more volatile (daily changes get larger), and asymmetric (more large upward moves than downward). These are textbook structural instability signatures, and there's a plausible mechanism for each in credit markets.
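The three signatures can be sketched as rolling statistics on daily spread changes. This is an illustration of the generic early-warning indicators, not our dual-channel framework itself; the function name, the window lengths, and the synthetic data are assumptions made for the example.

```python
import numpy as np
import pandas as pd

def early_warning_indicators(spread: pd.Series, window: int = 126) -> pd.DataFrame:
    """Rolling indicators on daily spread changes; `window` is a hypothetical choice."""
    changes = spread.diff().dropna()
    rolling = changes.rolling(window)
    return pd.DataFrame({
        "lag1_autocorr": rolling.corr(changes.shift(1)),  # "stickiness"
        "variance": rolling.var(),                        # size of daily moves
        "skewness": rolling.skew(),                       # asymmetry toward widening
    })

# Synthetic demo: one series with independent changes, one with AR(1) changes
rng = np.random.default_rng(0)
n = 2000
noise = rng.normal(size=n)
sticky = np.zeros(n)
for t in range(1, n):
    sticky[t] = 0.8 * sticky[t - 1] + noise[t]

dates = pd.bdate_range("2000-01-03", periods=n)
calm_spread = pd.Series(5.0 + np.cumsum(0.01 * noise), index=dates)
sticky_spread = pd.Series(5.0 + np.cumsum(0.01 * sticky), index=dates)

calm = early_warning_indicators(calm_spread, window=500)
stressed = early_warning_indicators(sticky_spread, window=500)
print(calm["lag1_autocorr"].iloc[-1], stressed["lag1_autocorr"].iloc[-1])
```

In the synthetic case the AR(1) series shows a clearly higher lag-1 autocorrelation and variance than the independent one, which is the pattern the framework looks for ahead of a transition.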

The data was clean. HY OAS is published daily on FRED and goes back to 1997, which makes the sample nearly identical to our equity backtest window.

What We Found

The detection framework identified 86 alert-level transitions on the credit series. In 93% of the 27 instances where HY OAS crossed its 90th percentile, our detection system had already fired, with a median lead time of 166 calendar days.
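One way coverage and lead time could be computed from two lists of dates is sketched below. The matching rule is an assumption (the earliest alert within a hypothetical 365-day lookback before each crossing), and the dates are made up for illustration; they are not our alert history.

```python
import pandas as pd

def lead_times(alerts, crossings, lookback_days: int = 365) -> pd.Series:
    """Calendar days from the earliest prior alert (within the lookback) to each crossing."""
    alerts = pd.DatetimeIndex(alerts).sort_values()
    leads = {}
    for crossing in pd.DatetimeIndex(crossings):
        window_start = crossing - pd.Timedelta(days=lookback_days)
        prior = alerts[(alerts >= window_start) & (alerts < crossing)]
        if len(prior) > 0:
            leads[crossing] = (crossing - prior[0]).days
    return pd.Series(leads, dtype="float64")

# Made-up dates, for illustration only
alerts = ["2001-01-10", "2001-06-01", "2003-11-01"]
crossings = ["2001-07-20", "2002-08-08", "2004-03-09"]

leads = lead_times(alerts, crossings)
coverage = len(leads) / len(crossings)
print(f"coverage: {coverage:.0%}, median lead: {leads.median():.0f} days")
```

The choice of matching rule matters: taking the earliest alert in a long lookback inflates both coverage and lead time, while taking the most recent alert shortens both.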

That sounds impressive until you look at the hit rate. Of those 86 alerts, only 40.7% were followed by a significant equity drawdown within 90 days. The base rate for any random 90-day window producing a drawdown is 28.1%. So the lift was 12.6 percentage points — real but modest.
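For readers who want to reproduce this kind of comparison, here is a hedged sketch of how a hit rate and a base rate can be measured on the same footing. It assumes a drawdown is measured from the signal day's close to the lowest close in the following window, and that "significant" means a hypothetical 10% threshold; neither definition is taken from our backtest.

```python
import numpy as np
import pandas as pd

def forward_drawdown(prices: pd.Series, horizon_days: int = 90) -> pd.Series:
    """Decline from each day's close to the lowest close within the next horizon_days calendar days."""
    out = pd.Series(np.nan, index=prices.index)
    last_day = prices.index[-1]
    for day, price in prices.items():
        end = day + pd.Timedelta(days=horizon_days)
        if end > last_day:
            continue                       # skip days without a full forward window
        out.loc[day] = prices.loc[day:end].min() / price - 1.0
    return out

def hit_and_base_rate(prices, alerts, horizon_days: int = 90, threshold: float = 0.10):
    """Share of alert days, and of all days, followed by a drawdown of at least `threshold`."""
    hit = forward_drawdown(prices, horizon_days).dropna() <= -threshold
    alert_days = pd.DatetimeIndex(alerts).intersection(hit.index)
    return hit.loc[alert_days].mean(), hit.mean()

# Toy price path with one 20% dip (made-up values)
dates = pd.date_range("2020-01-01", periods=10, freq="D")
prices = pd.Series([100, 100, 100, 80, 100, 100, 100, 100, 100, 100], index=dates, dtype=float)
hit_rate, base_rate = hit_and_base_rate(prices, [dates[1], dates[5]], horizon_days=3)
print(f"hit rate {hit_rate:.1%}, base rate {base_rate:.1%}, lift {hit_rate - base_rate:+.1%}")
```

Computing the base rate over every eligible day with the same window and threshold is what makes the lift meaningful; a base rate built on a different definition would not be comparable.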

More importantly, 166 days of lead time is not actionable. Five and a half months of "credit markets may be destabilizing" is too early and too noisy. Most of those alerts resolved without consequence.

The Uncomfortable Comparison

The raw HY OAS level crossing its 90th historical percentile — a single data series, one threshold, no complexity science — produced a 63% hit rate on 27 signals. That's 34.9 percentage points above base rate.

Our detection framework applied to the same credit data: 40.7% hit rate. Applied only to signals that fired before the spread crossed 90th: 31.9% — barely distinguishable from random.

The simple threshold crushed the sophisticated detection system. Not by a small margin. By roughly 22 percentage points.
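The arithmetic behind the comparison, using only the rates reported above, can be laid out as a short script. The dictionary labels are ours for this example.

```python
BASE_RATE = 28.1  # percent of random 90-day windows with a significant drawdown

reported_hit_rates = {
    "detection, all 86 alerts": 40.7,
    "detection, alerts before the 90th percentile cross": 31.9,
    "raw HY OAS 90th percentile threshold": 63.0,
}

# Lift over base rate, in percentage points
lift = {name: round(rate - BASE_RATE, 1) for name, rate in reported_hit_rates.items()}

# Head-to-head gap between the raw threshold and the full detection framework
gap = round(
    reported_hit_rates["raw HY OAS 90th percentile threshold"]
    - reported_hit_rates["detection, all 86 alerts"],
    1,
)

for name, points in lift.items():
    print(f"{name}: {points:+.1f} points over base rate")
print(f"threshold vs. detection: {gap:+.1f} points")
```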

Why Detection Theory Fails on Credit Spreads

Credit spreads are not equity returns. Equity returns are noisy, high-frequency, and granular — they generate the kind of rapid fluctuations that structural instability detection is designed to identify. Credit spreads are slow, persistent, and regime-like. They don't flutter and jitter on the way to a blowout — they grind steadily wider over weeks and months.

The detection framework looks for statistical precursors to transitions. But credit spread transitions don't have statistical precursors in the same way equity transitions do. The spread itself is already the integrated risk assessment of thousands of bond investors. By the time you can detect instability in the changes of the spread, the spread level has already moved enough to be informative on its own. You're layering higher-order statistics of the spread's changes on top of a level that's already telling you what you need to know.

There's also a structural issue. The median VIX reading on the days the detection system fired was at its 84th percentile, meaning equity volatility was already elevated when credit instability registered. That leaves no timing advantage. The whole point of early detection is finding something before the market reprices it. On credit data, the detection system fires after both the credit and equity markets have already noticed.

The One Interesting Finding We Filed Away

Credit spread channel 1, the stability channel applied to credit changes, produced a 51.9% hit rate on 27 signals. That's about 11 points behind the raw 90th percentile threshold's 63%, with a different timing profile. It's detecting when credit spread volatility itself becomes unstable, which is a narrower and potentially more useful signal than general credit elevation.

At 27 signals over nearly 30 years, we can't trust the number. But if it holds in out-of-sample data over the next several years, it becomes a candidate for integration. We're watching it.
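To put a rough size on that uncertainty, a Wilson score interval is one standard choice for a proportion from a small sample. The sketch below assumes the hit counts implied by the reported rates (14 of 27 for channel 1, 17 of 27 for the raw threshold); those counts are inferred from the percentages, and the interval treats signals as independent, which clustered market signals may not be.

```python
import math

def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives roughly 95%)."""
    p = hits / n
    denom = 1.0 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width

# Counts inferred from the reported rates (an assumption, see above)
channel_low, channel_high = wilson_interval(14, 27)      # 51.9% hit rate
threshold_low, threshold_high = wilson_interval(17, 27)  # 63% hit rate

print(f"channel 1:     {channel_low:.1%} to {channel_high:.1%}")
print(f"raw threshold: {threshold_low:.1%} to {threshold_high:.1%}")
```

Under those assumed counts, the channel 1 interval runs from roughly 34% to 69% and overlaps most of the threshold's interval, so 27 signals cannot tell the two apart with any confidence.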

What We Actually Use Instead

Credit spread data appears in our system as context, not as a detection channel. When our equity detection engine fires an alert, the subscriber sees the current high-yield spread percentile alongside the classification. "Credit markets are currently at the 73rd percentile" next to a structural deterioration classification gives the subscriber useful information — it tells them whether credit markets agree with what the equity system is seeing.

That's a one-line contextual indicator, not a detection channel. It adds real value for the subscriber without pretending that adding complexity to credit data improves on the simple, powerful signal the raw spread already provides.

The Broader Lesson

Not every application of a valid methodology produces a valid result. Critical transitions theory detects structural instability in systems with rapid, noisy dynamics — equity returns, ecological populations, climate variables. Credit spreads don't have those dynamics. They're already a distilled risk measure computed from thousands of individual bond prices. Applying structural instability detection to a measure that's already measuring structural instability is redundant.

The hardest thing in quantitative research is accepting when a simple tool outperforms a sophisticated one. A free daily number on FRED, with one percentile threshold, produces better drawdown prediction than months of detection framework development applied to the same data. We tested the idea rigorously, documented the result, and moved on.

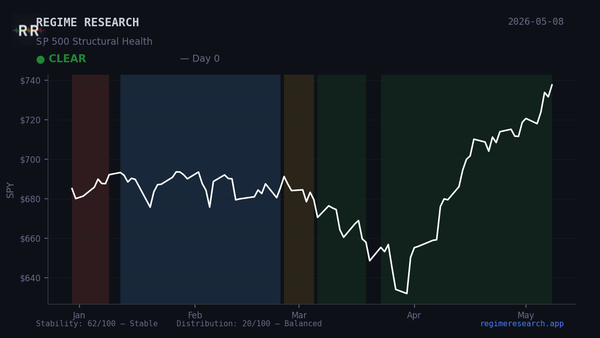

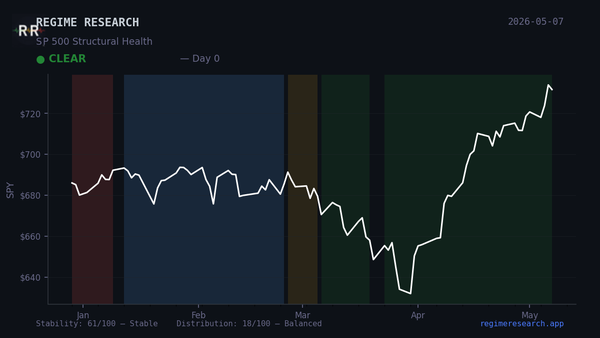

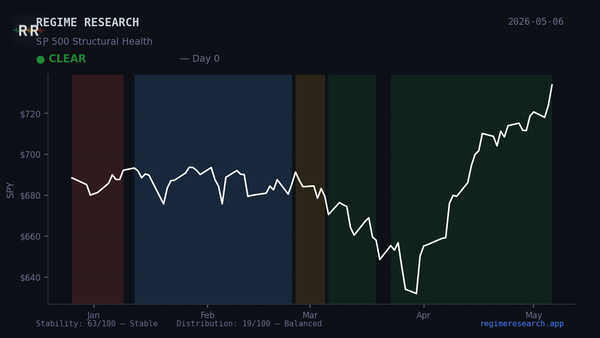

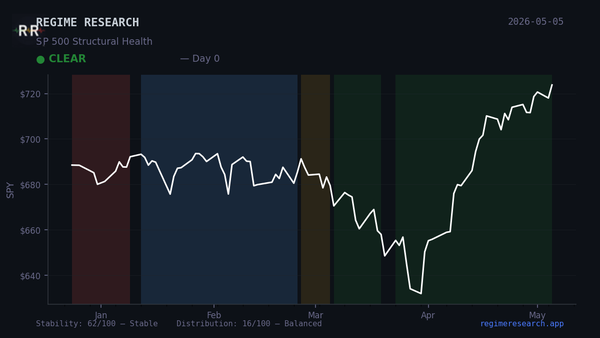

This post is part of an ongoing series documenting what we tested and rejected while building Regime Pulse — a structural health monitor for the S&P 500 built on critical transitions theory. We publish negative results because real research includes dead ends, and because showing what doesn't work is the only honest way to contextualize what does.

Regime Pulse provides scientific observations about structural market conditions for educational and informational purposes only. It does not provide investment advice. Past analysis is not indicative of future results.